ERFPhysBCFunct_u Class Reference

#include <ERF_PhysBCFunct.H>

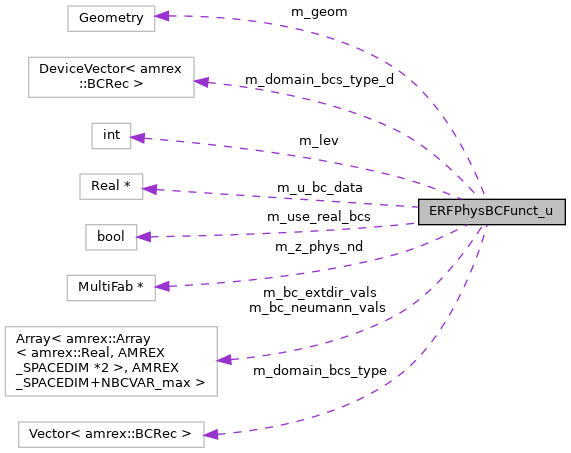

Collaboration diagram for ERFPhysBCFunct_u:

Public Member Functions

| Return type | Member |
| --- | --- |
| | ERFPhysBCFunct_u (const int lev, const amrex::Geometry &geom, const amrex::Vector< amrex::BCRec > &domain_bcs_type, const amrex::Gpu::DeviceVector< amrex::BCRec > &domain_bcs_type_d, amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > bc_extdir_vals, amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > bc_neumann_vals, std::unique_ptr< amrex::MultiFab > &z_phys_nd, const bool use_real_bcs, amrex::Real *u_bc_data) |
| | ~ERFPhysBCFunct_u () |
| void | operator() (amrex::MultiFab &mf, amrex::MultiFab &xvel, amrex::MultiFab &yvel, amrex::IntVect const &nghost, const amrex::Real time, int bccomp, bool do_fb) |
| void | impose_lateral_xvel_bcs (const amrex::Array4< amrex::Real > &dest_arr, const amrex::Array4< amrex::Real const > &xvel_arr, const amrex::Array4< amrex::Real const > &yvel_arr, const amrex::Box &bx, const amrex::Box &domain, int bccomp, const amrex::Real time) |
| void | impose_vertical_xvel_bcs (const amrex::Array4< amrex::Real > &dest_arr, const amrex::Box &bx, const amrex::Box &domain, const amrex::Array4< amrex::Real const > &z_nd, const amrex::GpuArray< amrex::Real, AMREX_SPACEDIM > dxInv, int bccomp, const amrex::Real time) |

Private Attributes

| Type | Name |
| --- | --- |
| int | m_lev |
| amrex::Geometry | m_geom |
| amrex::Vector< amrex::BCRec > | m_domain_bcs_type |
| amrex::Gpu::DeviceVector< amrex::BCRec > | m_domain_bcs_type_d |
| amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > | m_bc_extdir_vals |
| amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > | m_bc_neumann_vals |
| amrex::MultiFab * | m_z_phys_nd |
| bool | m_use_real_bcs |
| amrex::Real * | m_u_bc_data |
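The two boundary-value tables, m_bc_extdir_vals and m_bc_neumann_vals, share one nested-array shape: an outer index over AMREX_SPACEDIM+NBCVAR_max boundary components and an inner index over AMREX_SPACEDIM*2 domain faces. The sketch below mirrors that shape with std::array so the indexing can be tried without AMReX. Several things in it are assumptions rather than facts from this page: the value chosen for kNBCVarMax, the face ordering (all low faces first, then all high faces), and the helper names face_index and lookup_bc_value, which are hypothetical.

```cpp
#include <array>
#include <cassert>
#include <cstddef>

// Stand-ins for the compile-time constants used by the real class.
constexpr int kSpaceDim  = 3;  // AMREX_SPACEDIM in a 3D build
constexpr int kNBCVarMax = 8;  // hypothetical value; the real NBCVAR_max is defined by ERF

using Real = double;

// Same shape as m_bc_extdir_vals / m_bc_neumann_vals:
// outer index = boundary component, inner index = domain face.
using BCValueTable =
    std::array<std::array<Real, 2 * kSpaceDim>, kSpaceDim + kNBCVarMax>;

// Assumed face ordering: [x_lo, y_lo, z_lo, x_hi, y_hi, z_hi].
constexpr int face_index(int dir, bool high_side)
{
    return high_side ? dir + kSpaceDim : dir;
}

// Look up the boundary value stored for one component on one face.
inline Real lookup_bc_value(const BCValueTable& table, int bccomp,
                            int dir, bool high_side)
{
    assert(bccomp >= 0 && bccomp < kSpaceDim + kNBCVarMax);
    assert(dir >= 0 && dir < kSpaceDim);
    return table[static_cast<std::size_t>(bccomp)]
                [static_cast<std::size_t>(face_index(dir, high_side))];
}
```

Under these assumptions, a value stored at table[0][3] would be read back by lookup_bc_value(table, 0, 0, true), that is, component 0 on the high-x face.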

Constructor & Destructor Documentation

◆ ERFPhysBCFunct_u()

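ERFPhysBCFunct_u::ERFPhysBCFunct_u

| Type | Parameter |
| --- | --- |
| const int | lev |
| const amrex::Geometry & | geom |
| const amrex::Vector< amrex::BCRec > & | domain_bcs_type |
| const amrex::Gpu::DeviceVector< amrex::BCRec > & | domain_bcs_type_d |
| amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > | bc_extdir_vals |
| amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > | bc_neumann_vals |
| std::unique_ptr< amrex::MultiFab > & | z_phys_nd |
| const bool | use_real_bcs |
| amrex::Real * | u_bc_data |

inline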

- m_domain_bcs_type (amrex::Vector< amrex::BCRec >). Definition: ERF_PhysBCFunct.H:135

- m_domain_bcs_type_d (amrex::Gpu::DeviceVector< amrex::BCRec >). Definition: ERF_PhysBCFunct.H:136

- m_bc_extdir_vals (amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max >). Definition: ERF_PhysBCFunct.H:137

- m_bc_neumann_vals (amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max >). Definition: ERF_PhysBCFunct.H:138

◆ ~ERFPhysBCFunct_u()

Member Function Documentation

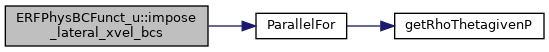

◆ impose_lateral_xvel_bcs()

void ERFPhysBCFunct_u::impose_lateral_xvel_bcs

| Type | Parameter |
| --- | --- |
| const amrex::Array4< amrex::Real > & | dest_arr |
| const amrex::Array4< amrex::Real const > & | xvel_arr |
| const amrex::Array4< amrex::Real const > & | yvel_arr |
| const amrex::Box & | bx |
| const amrex::Box & | domain |
| int | bccomp |
| const amrex::Real | time |


Definition: ERF_BoundaryConditionsXvel.cpp:15
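As a rough illustration of what a lateral external-Dirichlet fill does, the sketch below works on a single 1D pencil of values that carries ghost entries on both ends. It is not the ERF implementation: the Pencil type and fill_ext_dir function are hypothetical, the real routine operates on amrex::Array4 data over an amrex::Box on the GPU, and the sketch ignores the x-face centering of the x-velocity and every boundary type other than a fixed external value.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// A 1D strip of values whose first stored entry has index `lo`.
// Negative indices (or indices past the domain) play the role of ghost cells.
struct Pencil {
    int lo;
    std::vector<double> data;

    double& at(int i) { return data[static_cast<std::size_t>(i - lo)]; }
    int hi() const { return lo + static_cast<int>(data.size()) - 1; }
};

// Overwrite every entry outside [dom_lo, dom_hi] with the prescribed
// boundary value for that side; interior entries are left untouched.
inline void fill_ext_dir(Pencil& p, int dom_lo, int dom_hi,
                         double val_lo, double val_hi)
{
    for (int i = p.lo; i <= p.hi(); ++i) {
        if (i < dom_lo) {
            p.at(i) = val_lo;   // ghost entries below the domain
        } else if (i > dom_hi) {
            p.at(i) = val_hi;   // ghost entries above the domain
        }
    }
}
```

In this toy setting, val_lo and val_hi correspond to the low-side and high-side entries that a table such as m_bc_extdir_vals would supply for the component selected by bccomp.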

Here is the call graph for this function:

◆ impose_vertical_xvel_bcs()

void ERFPhysBCFunct_u::impose_vertical_xvel_bcs

| Type | Parameter |
| --- | --- |
| const amrex::Array4< amrex::Real > & | dest_arr |
| const amrex::Box & | bx |
| const amrex::Box & | domain |
| const amrex::Array4< amrex::Real const > & | z_nd |
| const amrex::GpuArray< amrex::Real, AMREX_SPACEDIM > | dxInv |
| int | bccomp |
| const amrex::Real | time |


Referenced terrain-metric functions. Each is declared AMREX_GPU_DEVICE AMREX_FORCE_INLINE, returns amrex::Real, and takes (const int &i, const int &j, const int &k, const amrex::GpuArray< amrex::Real, AMREX_SPACEDIM > &cellSizeInv, const amrex::Array4< const amrex::Real > &z_nd):

- Compute_h_zeta_AtIface. Definition: ERF_TerrainMetrics.H:104

- Compute_h_xi_AtIface. Definition: ERF_TerrainMetrics.H:117

- Compute_h_eta_AtIface. Definition: ERF_TerrainMetrics.H:131

Definition: ERF_BoundaryConditionsXvel.cpp:193

Here is the call graph for this function:

◆ operator()()

void ERFPhysBCFunct_u::operator()

| Type | Parameter |
| --- | --- |
| amrex::MultiFab & | mf |
| amrex::MultiFab & | xvel |
| amrex::MultiFab & | yvel |
| amrex::IntVect const & | nghost |
| const amrex::Real | time |
| int | bccomp |
| bool | do_fb |
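The class is a function object: configuration is captured once in the constructor, and the boundary fill is then triggered by calling the object itself. The toy below shows only that calling pattern on a small 2D field with ghost cells. Everything in it is hypothetical (Field2D, ToyBCFunct, and their members), and two behaviors are assumptions rather than facts from this page: that the lateral fill runs before the vertical fill, and that the vertical fill is a zero-gradient copy. The real operator() works on amrex::MultiFab data and also takes nghost, time, bccomp, and do_fb.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Minimal 2D field with `ng` ghost cells on every side.
// Valid interior indices are 0..nx-1 in i and 0..nz-1 in k.
struct Field2D {
    int nx, nz, ng;
    std::vector<double> data;

    Field2D(int nx_, int nz_, int ng_, double init)
        : nx(nx_), nz(nz_), ng(ng_),
          data(static_cast<std::size_t>((nx_ + 2 * ng_) * (nz_ + 2 * ng_)), init) {}

    double& operator()(int i, int k)
    {
        return data[static_cast<std::size_t>((k + ng) * (nx + 2 * ng) + (i + ng))];
    }
};

// Toy analogue of a boundary-condition functor.
class ToyBCFunct {
public:
    explicit ToyBCFunct(double x_dirichlet) : m_x_dirichlet(x_dirichlet) {}

    // Calling the object fills all ghost cells of the field.
    void operator()(Field2D& f) const
    {
        impose_lateral(f);   // assumed to run first
        impose_vertical(f);  // then extends the result into the k-ghost rows
    }

private:
    // Fixed value in the i-direction ghost cells of interior rows.
    void impose_lateral(Field2D& f) const
    {
        for (int k = 0; k < f.nz; ++k) {
            for (int g = 1; g <= f.ng; ++g) {
                f(-g, k) = m_x_dirichlet;
                f(f.nx - 1 + g, k) = m_x_dirichlet;
            }
        }
    }

    // Zero-gradient copy into the k-direction ghost rows, corners included.
    void impose_vertical(Field2D& f) const
    {
        for (int i = -f.ng; i < f.nx + f.ng; ++i) {
            for (int g = 1; g <= f.ng; ++g) {
                f(i, -g) = f(i, 0);
                f(i, f.nz - 1 + g) = f(i, f.nz - 1);
            }
        }
    }

    double m_x_dirichlet;
};
```

Running the lateral pass first means the corner ghost cells pick up the lateral boundary value during the vertical copy, which is one reason a functor might fix the order of the two passes internally rather than leaving it to the caller.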

Member Data Documentation

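◆ m_bc_extdir_vals

amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > ERFPhysBCFunct_u::m_bc_extdir_vals

private

◆ m_bc_neumann_vals

amrex::Array< amrex::Array< amrex::Real, AMREX_SPACEDIM *2 >, AMREX_SPACEDIM+NBCVAR_max > ERFPhysBCFunct_u::m_bc_neumann_vals

private

◆ m_domain_bcs_type

amrex::Vector< amrex::BCRec > ERFPhysBCFunct_u::m_domain_bcs_type

private

◆ m_domain_bcs_type_d

amrex::Gpu::DeviceVector< amrex::BCRec > ERFPhysBCFunct_u::m_domain_bcs_type_d

private

◆ m_geom

amrex::Geometry ERFPhysBCFunct_u::m_geom

private

◆ m_lev

int ERFPhysBCFunct_u::m_lev

private

◆ m_u_bc_data

amrex::Real * ERFPhysBCFunct_u::m_u_bc_data

private

◆ m_use_real_bcs

bool ERFPhysBCFunct_u::m_use_real_bcs

private

◆ m_z_phys_nd

amrex::MultiFab * ERFPhysBCFunct_u::m_z_phys_nd

private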

The documentation for this class was generated from the following files:

- Source/BoundaryConditions/ERF_PhysBCFunct.H

- Source/BoundaryConditions/ERF_BoundaryConditionsXvel.cpp

- Source/BoundaryConditions/ERF_PhysBCFunct.cpp