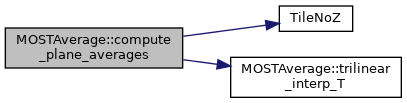

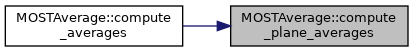

Function to compute average over a plane.

868 const auto & geom =

m_geom[lev];

883 Real d_fact_new, d_fact_old;

892 int klo =

m_geom[lev].Domain().smallEnd(2);

895 Gpu::DeviceVector<Real> pavg(plane_average.size(),

zero);

896 Real* plane_avg = pavg.data();

899 Vector<Real> denom(plane_average.size(),

zero);

900 Vector<Real> val_old(plane_average.size(),

zero);

907 Box domain = geom.Domain();

909 Array<int,AMREX_SPACEDIM> is_per = {0,0,0};

910 for (

int idim(0); idim < AMREX_SPACEDIM-1; ++idim) {

911 if (geom.isPeriodic(idim)) is_per[idim] = 1;

915 for (

int imf(0); imf < 4; ++imf) {

918 if (!fields[imf])

continue;

920 denom[imf] =

one / (

Real)ncell_plane[imf];

921 val_old[imf] = plane_average[imf]*d_fact_old;

924 #pragma omp parallel if (Gpu::notInLaunchRegion())

926 for (MFIter mfi(*fields[imf],

TileNoZ()); mfi.isValid(); ++mfi) {

927 Box vbx = mfi.validbox();

928 Box pbx = mfi.tilebox();

930 if (pbx.smallEnd(2) != klo) {

continue; }

937 IndexType ixt = averages[imf]->boxArray().ixType();

938 for (

int idim(0); idim < AMREX_SPACEDIM-1; ++idim) {

939 if ( ixt.nodeCentered(idim) && (pbx.bigEnd(idim) == vbx.bigEnd(idim)) ) {

940 int dom_hi = domain.bigEnd(idim)+1;

941 if (pbx.bigEnd(idim) <

dom_hi || is_per[idim]) {

947 auto mf_arr = (

m_rotate) ? rot_fields[imf]->const_array(mfi) :

948 fields[imf]->const_array(mfi);

951 const auto plo = geom.ProbLoArray();

952 const auto dxInv = geom.InvCellSizeArray();

953 const auto z_phys_arr = z_phys->const_array(mfi);

954 auto x_pos_arr = x_pos->array(mfi);

955 auto y_pos_arr = y_pos->array(mfi);

956 auto z_pos_arr = z_pos->array(mfi);

957 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

958 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

962 &interp, mf_arr, z_phys_arr, plo,

dxInv, 1);

964 Gpu::deviceReduceSum(&plane_avg[imf], val, handler);

967 auto k_arr = k_indx->const_array(mfi);

968 auto j_arr = j_indx ? j_indx->const_array(mfi) : Array4<const int> {};

969 auto i_arr = i_indx ? i_indx->const_array(mfi) : Array4<const int> {};

970 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

971 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

973 int mk = k_arr(i,j,0);

974 int mj = j_arr ? j_arr(i,j,0) : j;

975 int mi = i_arr ? i_arr(i,j,0) : i;

976 Real val = mf_arr(mi,mj,mk);

977 Gpu::deviceReduceSum(&plane_avg[imf], val, handler);

992 denom[iavg] =

one / (

Real)ncell_plane[iavg];

993 val_old[iavg] = plane_average[iavg]*d_fact_old;

996 #pragma omp parallel if (Gpu::notInLaunchRegion())

998 for (MFIter mfi(*fields[3],

TileNoZ()); mfi.isValid(); ++mfi)

1000 Box pbx = mfi.tilebox();

1002 if (pbx.smallEnd(2) != klo) {

continue; }

1004 pbx.makeSlab(2,klo);

1006 const Array4<Real const>& T_mf_arr = fields[2]->const_array(mfi);

1007 const Array4<Real const>& qv_mf_arr = fields[3]->const_array(mfi);

1008 const Array4<Real const>& qr_mf_arr = (fields[4]) ? fields[4]->const_array(mfi) :

1009 Array4<const Real> {};

1012 const auto plo =

m_geom[lev].ProbLoArray();

1013 const auto dxInv =

m_geom[lev].InvCellSizeArray();

1014 const auto z_phys_arr = z_phys->const_array(mfi);

1015 auto x_pos_arr = x_pos->array(mfi);

1016 auto y_pos_arr = y_pos->array(mfi);

1017 auto z_pos_arr = z_pos->array(mfi);

1018 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

1019 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

1024 &T_interp, T_mf_arr, z_phys_arr, plo,

dxInv, 1);

1026 &qv_interp, qv_mf_arr, z_phys_arr, plo,

dxInv, 1);

1032 &qr_interp, qr_mf_arr, z_phys_arr, plo,

dxInv, 1);

1038 Gpu::deviceReduceSum(&plane_avg[iavg], val, handler);

1041 auto k_arr = k_indx->const_array(mfi);

1042 auto j_arr = j_indx ? j_indx->const_array(mfi) : Array4<const int> {};

1043 auto i_arr = i_indx ? i_indx->const_array(mfi) : Array4<const int> {};

1044 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

1045 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

1047 int mk = k_arr(i,j,0);

1048 int mj = j_arr ? j_arr(i,j,0) : j;

1049 int mi = i_arr ? i_arr(i,j,0) : i;

1053 vfac =

one +

Real(0.61)*qv_mf_arr(mi,mj,mk) - qr_mf_arr(mi,mj,mk);

1057 const Real val = T_mf_arr(mi,mj,mk) *

vfac;

1058 Gpu::deviceReduceSum(&plane_avg[iavg], val, handler);

1066 denom[iavg] =

one / (

Real)ncell_plane[iavg];

1068 Gpu::copy(Gpu::deviceToDevice, pavg.begin() + 2, pavg.begin() + 3,

1069 pavg.begin() + iavg);

1082 denom[iavg] =

one / (

Real)ncell_plane[iavg];

1083 val_old[iavg] = plane_average[iavg]*d_fact_old;

1088 #pragma omp parallel if (Gpu::notInLaunchRegion())

1090 for (MFIter mfi(*fields[imf_cc],

TileNoZ()); mfi.isValid(); ++mfi)

1092 Box pbx = mfi.tilebox();

1094 if (pbx.smallEnd(2) != klo) {

continue; }

1096 pbx.makeSlab(2,klo);

1099 auto u_mf_arr = (

m_rotate) ? rot_fields[imf ]->const_array(mfi) :

1100 fields[imf ]->const_array(mfi);

1101 auto v_mf_arr = (

m_rotate) ? rot_fields[imf+1]->const_array(mfi) :

1102 fields[imf+1]->const_array(mfi);

1105 const auto plo =

m_geom[lev].ProbLoArray();

1106 const auto dxInv =

m_geom[lev].InvCellSizeArray();

1107 const auto z_phys_arr = z_phys->const_array(mfi);

1108 auto x_pos_arr = x_pos->array(mfi);

1109 auto y_pos_arr = y_pos->array(mfi);

1110 auto z_pos_arr = z_pos->array(mfi);

1111 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

1112 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

1117 &u_interp, u_mf_arr, z_phys_arr, plo,

dxInv, 1);

1119 &v_interp, v_mf_arr, z_phys_arr, plo,

dxInv, 1);

1120 const Real val = std::sqrt(u_interp*u_interp + v_interp*v_interp + Vsg*Vsg);

1121 Gpu::deviceReduceSum(&plane_avg[iavg], val, handler);

1124 auto k_arr = k_indx->const_array(mfi);

1125 auto j_arr = j_indx ? j_indx->const_array(mfi) : Array4<const int> {};

1126 auto i_arr = i_indx ? i_indx->const_array(mfi) : Array4<const int> {};

1127 ParallelFor(Gpu::KernelInfo().setReduction(

true), pbx, [=]

1128 AMREX_GPU_DEVICE(

int i,

int j,

int , Gpu::Handler

const& handler) noexcept

1130 int mk = k_arr(i,j,0);

1131 int mj = j_arr ? j_arr(i,j,0) : j;

1132 int mi = i_arr ? i_arr(i,j,0) : i;

1133 const Real u_val =

myhalf * (u_mf_arr(mi,mj,mk) + u_mf_arr(mi+1,mj ,mk));

1134 const Real v_val =

myhalf * (v_mf_arr(mi,mj,mk) + v_mf_arr(mi ,mj+1,mk));

1135 const Real val = std::sqrt(u_val*u_val + v_val*v_val + Vsg*Vsg);

1136 Gpu::deviceReduceSum(&plane_avg[iavg], val, handler);

1143 Gpu::copy(Gpu::deviceToHost, pavg.begin(), pavg.end(), plane_average.begin());

1144 ParallelDescriptor::ReduceRealSum(plane_average.data(),

static_cast<int>(plane_average.size()));

1147 for (

int iavg(0); iavg <

m_navg; ++iavg){

1148 plane_average[iavg] *= denom[iavg]*d_fact_new;

1149 plane_average[iavg] += val_old[iavg];

1150 averages[iavg]->setVal(plane_average[iavg]);

constexpr amrex::Real one

Definition: ERF_Constants.H:7

constexpr amrex::Real zero

Definition: ERF_Constants.H:6

constexpr amrex::Real myhalf

Definition: ERF_Constants.H:11

Real vfac

Definition: ERF_InitCustomPertVels_ABL.H:26

amrex::GpuArray< Real, AMREX_SPACEDIM > dxInv

Definition: ERF_InitCustomPertVels_ParticleTests.H:17

ParallelFor(bx, [=] AMREX_GPU_DEVICE(int i, int j, int k) noexcept { const Real *dx=geomdata.CellSize();const Real x=(i+myhalf) *dx[0];const Real y=(j+myhalf) *dx[1];const Real Omg=erf_vortex_Gaussian(x, y, xc, yc, R, beta, sigma);const Real deltaT=-(gamma - one)/(two *sigma *sigma) *Omg *Omg;const Real rho_norm=std::pow(one+deltaT, inv_gm1);const Real T=(one+deltaT) *T_inf;const Real p=std::pow(rho_norm, Gamma)/Gamma *rho_0 *a_inf *a_inf;const Real rho_theta=rho_0 *rho_norm *(T *std::pow(p_0/p, rdOcp));state_pert(i, j, k, RhoTheta_comp)=rho_theta - getRhoThetagivenP(p_hse(i, j, k));const Real r2d_xy=std::sqrt((x-xc) *(x-xc)+(y-yc) *(y-yc));state_pert(i, j, k, RhoScalar_comp)=fourth *(one+std::cos(PI *std::min(r2d_xy, R)/R));})

const auto & dom_hi

Definition: ERF_SetupVertDiff.H:2

amrex::Real Real

Definition: ERF_ShocInterface.H:19

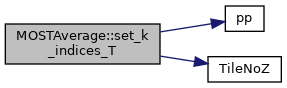

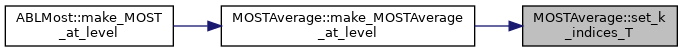

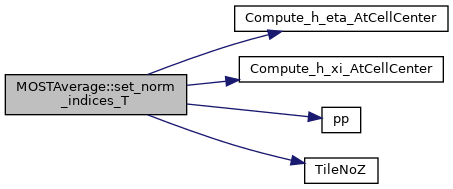

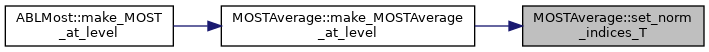

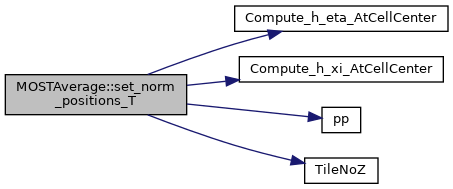

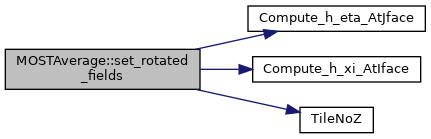

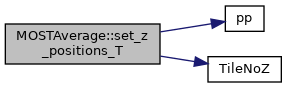

AMREX_FORCE_INLINE amrex::IntVect TileNoZ()

Definition: ERF_TileNoZ.H:11

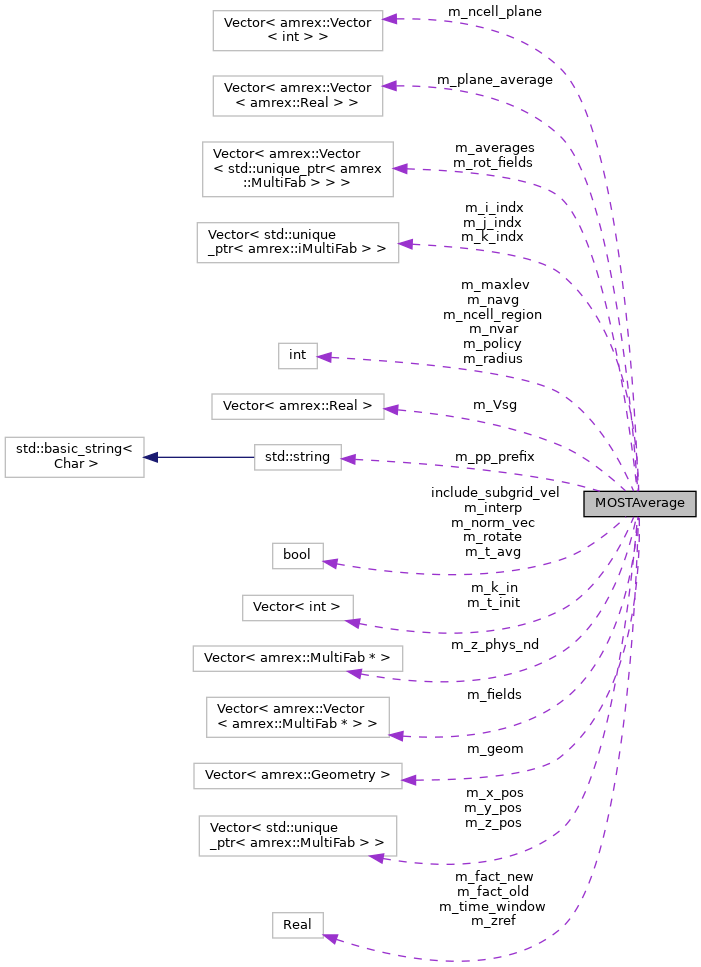

int m_navg

Definition: ERF_MOSTAverage.H:205

amrex::Vector< amrex::Vector< std::unique_ptr< amrex::MultiFab > > > m_averages

Definition: ERF_MOSTAverage.H:216

amrex::Vector< std::unique_ptr< amrex::MultiFab > > m_y_pos

Definition: ERF_MOSTAverage.H:211

amrex::Vector< std::unique_ptr< amrex::iMultiFab > > m_i_indx

Definition: ERF_MOSTAverage.H:213

amrex::Vector< amrex::MultiFab * > m_z_phys_nd

Definition: ERF_MOSTAverage.H:197

amrex::Vector< std::unique_ptr< amrex::MultiFab > > m_x_pos

Definition: ERF_MOSTAverage.H:210

amrex::Vector< amrex::Real > m_Vsg

Definition: ERF_MOSTAverage.H:245

amrex::Vector< amrex::Vector< std::unique_ptr< amrex::MultiFab > > > m_rot_fields

Definition: ERF_MOSTAverage.H:217

amrex::Vector< amrex::Vector< amrex::Real > > m_plane_average

Definition: ERF_MOSTAverage.H:222

amrex::Vector< std::unique_ptr< amrex::MultiFab > > m_z_pos

Definition: ERF_MOSTAverage.H:212

amrex::Vector< amrex::Vector< int > > m_ncell_plane

Definition: ERF_MOSTAverage.H:221

amrex::Real m_fact_new

Definition: ERF_MOSTAverage.H:240

amrex::Vector< std::unique_ptr< amrex::iMultiFab > > m_j_indx

Definition: ERF_MOSTAverage.H:214

amrex::Vector< amrex::Vector< amrex::MultiFab * > > m_fields

Definition: ERF_MOSTAverage.H:196

amrex::Real m_fact_old

Definition: ERF_MOSTAverage.H:240

bool m_interp

Definition: ERF_MOSTAverage.H:232

const amrex::Vector< amrex::Geometry > m_geom

Definition: ERF_MOSTAverage.H:195

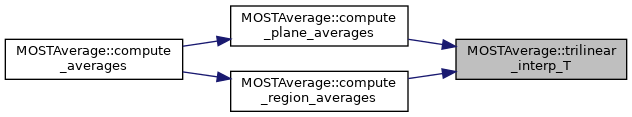

AMREX_GPU_HOST_DEVICE static AMREX_INLINE void trilinear_interp_T(const amrex::Real &xp, const amrex::Real &yp, const amrex::Real &zp, amrex::Real *interp_vals, amrex::Array4< amrex::Real const > const &interp_array, amrex::Array4< amrex::Real const > const &z_arr, const amrex::GpuArray< amrex::Real, AMREX_SPACEDIM > &plo, const amrex::GpuArray< amrex::Real, AMREX_SPACEDIM > &dxi, const int interp_comp)

Definition: ERF_MOSTAverage.H:126

amrex::Vector< std::unique_ptr< amrex::iMultiFab > > m_k_indx

Definition: ERF_MOSTAverage.H:215