Box domain = m_geom.Domain();

Print() << "Noah-MP driver started at time step: " << nstep+1 << std::endl;

bool is_moist = (cons_in.nComp() > RhoQ1_comp);

int klo = domain.smallEnd(2);

for (MFIter mfi(cons_in); mfi.isValid(); ++mfi, ++idb) {

    Box bx  = mfi.tilebox();
    Box gbx = mfi.tilebox(IntVect(0,0,0),IntVect(1,1,0));

    // Skip tiles that do not start at the bottom of the domain
    if (bx.smallEnd(2) != klo) { continue; }

    // Horizontal bounds of the tile, used below to clamp indices
    int i_lo = bx.smallEnd(0); int i_hi = bx.bigEnd(0);
    int j_lo = bx.smallEnd(1); int j_hi = bx.bigEnd(1);

    const Array4<const Real>& U_PHY = xvel_in.const_array(mfi);
    const Array4<const Real>& V_PHY = yvel_in.const_array(mfi);
    const Array4<const Real>& CONS  = cons_in.const_array(mfi);

    Box bx2d = makeSlab(bx,2,0);

    // Pinned-memory staging buffers: Noah-MP inputs ...
    FArrayBox tmp_u_phy(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_v_phy(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_t_phy(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_qv_curr(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_p8w(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_swdown(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_glw(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_coszen(bx, 1, The_Pinned_Arena());
    // ... and Noah-MP outputs
    FArrayBox tmp_hfx(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_lh(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_tau_ew(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_tau_ns(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_tsk(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_emiss(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_albsfcdir_vis(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_albsfcdir_nir(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_albsfcdif_vis(bx, 1, The_Pinned_Arena());
    FArrayBox tmp_albsfcdif_nir(bx, 1, The_Pinned_Arena());

    auto const& tmp_u_phy_arr   = tmp_u_phy.array();
    auto const& tmp_v_phy_arr   = tmp_v_phy.array();
    auto const& tmp_t_phy_arr   = tmp_t_phy.array();
    auto const& tmp_qv_curr_arr = tmp_qv_curr.array();
    auto const& tmp_p8w_arr     = tmp_p8w.array();
    auto const& tmp_swdown_arr  = tmp_swdown.array();
    auto const& tmp_glw_arr     = tmp_glw.array();
    auto const& tmp_coszen_arr  = tmp_coszen.array();

    // Fill the input buffers on the device
    ParallelFor(bx, [=] AMREX_GPU_DEVICE (int i, int j, int k) noexcept
    {

        // Average face velocities to cell centers
        tmp_u_phy_arr(i,j,0) = myhalf*(U_PHY(i,j,k)+U_PHY(i+1,j,k));
        tmp_v_phy_arr(i,j,0) = myhalf*(V_PHY(i,j,k)+V_PHY(i,j+1,k));
        tmp_t_phy_arr(i,j,0) = getTgivenRandRTh(CONS(i,j,k,Rho_comp),CONS(i,j,k,RhoTheta_comp),qv);
        tmp_qv_curr_arr(i,j,0) = qv;

        tmp_swdown_arr(i,j,0) = SWDOWN(i,j,0);
        tmp_glw_arr(i,j,0)    = GLW(i,j,0);
        tmp_coszen_arr(i,j,0) = COSZEN(i,j,0);
    });

    // Wait for the device kernel before reading the buffers on the host
    Gpu::streamSynchronize();

    const auto& h_u_arr      = tmp_u_phy.const_array();
    const auto& h_v_arr      = tmp_v_phy.const_array();
    const auto& h_t_arr      = tmp_t_phy.const_array();
    const auto& h_qv_arr     = tmp_qv_curr.const_array();
    const auto& h_p8w_arr    = tmp_p8w.const_array();
    const auto& h_swdown_arr = tmp_swdown.const_array();
    const auto& h_glw_arr    = tmp_glw.const_array();
    const auto& h_coszen_arr = tmp_coszen.const_array();

    // Copy the staged inputs into the Noah-MP I/O arrays on the host
    LoopOnCpu(bx, [&] (int i, int j, int ) noexcept
    {
        noahmpio->U_PHY(i,1,j)   = h_u_arr(i,j,0);
        noahmpio->V_PHY(i,1,j)   = h_v_arr(i,j,0);
        noahmpio->T_PHY(i,1,j)   = h_t_arr(i,j,0);
        noahmpio->QV_CURR(i,1,j) = h_qv_arr(i,j,0);
        noahmpio->P8W(i,1,j)     = h_p8w_arr(i,j,0);
        noahmpio->SWDOWN(i,j)    = h_swdown_arr(i,j,0);
        noahmpio->GLW(i,j)       = h_glw_arr(i,j,0);
        noahmpio->COSZEN(i,j)    = h_coszen_arr(i,j,0);
    });

    noahmpio->itimestep = nstep+1;
    noahmpio->DriverMain();

    auto h_hfx_arr           = tmp_hfx.array();
    auto h_lh_arr            = tmp_lh.array();
    auto h_tau_ew_arr        = tmp_tau_ew.array();
    auto h_tau_ns_arr        = tmp_tau_ns.array();
    auto h_tsk_arr           = tmp_tsk.array();
    auto h_emiss_arr         = tmp_emiss.array();
    auto h_albsfcdir_vis_arr = tmp_albsfcdir_vis.array();
    auto h_albsfcdir_nir_arr = tmp_albsfcdir_nir.array();
    auto h_albsfcdif_vis_arr = tmp_albsfcdif_vis.array();
    auto h_albsfcdif_nir_arr = tmp_albsfcdif_nir.array();

    // Copy the Noah-MP outputs back into the staging buffers on the host
    LoopOnCpu(bx, [&] (int i, int j, int ) noexcept
    {
        h_hfx_arr(i,j,0)           = noahmpio->HFX(i,j);
        h_lh_arr(i,j,0)            = noahmpio->LH(i,j);
        h_tau_ew_arr(i,j,0)        = noahmpio->TAU_EW(i,j);
        h_tau_ns_arr(i,j,0)        = noahmpio->TAU_NS(i,j);
        h_tsk_arr(i,j,0)           = noahmpio->TSK(i,j);
        h_emiss_arr(i,j,0)         = noahmpio->EMISS(i,j);
        h_albsfcdir_vis_arr(i,j,0) = noahmpio->ALBSFCDIRXY(i,1,j);
        h_albsfcdir_nir_arr(i,j,0) = noahmpio->ALBSFCDIRXY(i,2,j);
        h_albsfcdif_vis_arr(i,j,0) = noahmpio->ALBSFCDIFXY(i,1,j);
        h_albsfcdif_nir_arr(i,j,0) = noahmpio->ALBSFCDIFXY(i,2,j);
    });

    auto const& tmp_hfx_arr           = tmp_hfx.array();
    auto const& tmp_lh_arr            = tmp_lh.array();
    auto const& tmp_tau_ew_arr        = tmp_tau_ew.array();
    auto const& tmp_tau_ns_arr        = tmp_tau_ns.array();
    auto const& tmp_tsk_arr           = tmp_tsk.array();
    auto const& tmp_emiss_arr         = tmp_emiss.array();
    auto const& tmp_albsfcdir_vis_arr = tmp_albsfcdir_vis.array();
    auto const& tmp_albsfcdir_nir_arr = tmp_albsfcdir_nir.array();
    auto const& tmp_albsfcdif_vis_arr = tmp_albsfcdif_vis.array();
    auto const& tmp_albsfcdif_nir_arr = tmp_albsfcdif_nir.array();

    // Write fluxes and surface properties on the grown box
    ParallelFor(gbx, [=] AMREX_GPU_DEVICE (int i, int j, int k) noexcept
    {

        // Clamp to the tile so cells outside bx reuse the nearest value inside it
        int ii = std::min(std::max(i,i_lo),i_hi);
        int jj = std::min(std::max(j,j_lo),j_hi);

        t_flux_arr(i,j,k) = tmp_hfx_arr(ii,jj,0)/(CONS(ii,jj,k,Rho_comp)*Cp_d);
        q_flux_arr(i,j,k) = tmp_lh_arr(ii,jj,0)/(CONS(ii,jj,k,Rho_comp)*L_v);

        tau13_arr(i,j,k) = tmp_tau_ew_arr(ii,jj,0)/CONS(ii,jj,k,Rho_comp);
        tau23_arr(i,j,k) = tmp_tau_ns_arr(ii,jj,0)/CONS(ii,jj,k,Rho_comp);

        TSK(i,j,0)           = tmp_tsk_arr(ii,jj,0);
        EMISS(i,j,0)         = tmp_emiss_arr(ii,jj,0);
        ALBSFCDIR_VIS(i,j,0) = tmp_albsfcdir_vis_arr(ii,jj,0);
        ALBSFCDIR_NIR(i,j,0) = tmp_albsfcdir_nir_arr(ii,jj,0);
        ALBSFCDIF_VIS(i,j,0) = tmp_albsfcdif_vis_arr(ii,jj,0);
        ALBSFCDIF_NIR(i,j,0) = tmp_albsfcdif_nir_arr(ii,jj,0);
    });

}

Print() << "Noah-MP driver completed" << std::endl;

constexpr amrex::Real Cp_d

Definition: ERF_Constants.H:23

constexpr amrex::Real zero

Definition: ERF_Constants.H:6

constexpr amrex::Real myhalf

Definition: ERF_Constants.H:11

constexpr amrex::Real L_v

Definition: ERF_Constants.H:27

AMREX_GPU_HOST_DEVICE AMREX_FORCE_INLINE amrex::Real getTgivenRandRTh(const amrex::Real rho, const amrex::Real rhotheta, const amrex::Real qv=amrex::Real(0))

Definition: ERF_EOS.H:46

AMREX_GPU_HOST_DEVICE AMREX_FORCE_INLINE amrex::Real getPgivenRTh(const amrex::Real rhotheta, const amrex::Real qv=amrex::Real(0))

Definition: ERF_EOS.H:81

#define Rho_comp

Definition: ERF_IndexDefines.H:36

#define RhoTheta_comp

Definition: ERF_IndexDefines.H:37

#define RhoQ1_comp

Definition: ERF_IndexDefines.H:42

ParallelFor(bx, [=] AMREX_GPU_DEVICE(int i, int j, int k) noexcept { const Real *dx=geomdata.CellSize();const Real x=(i+myhalf) *dx[0];const Real y=(j+myhalf) *dx[1];const Real Omg=erf_vortex_Gaussian(x, y, xc, yc, R, beta, sigma);const Real deltaT=-(gamma - one)/(two *sigma *sigma) *Omg *Omg;const Real rho_norm=std::pow(one+deltaT, inv_gm1);const Real T=(one+deltaT) *T_inf;const Real p=std::pow(rho_norm, Gamma)/Gamma *rho_0 *a_inf *a_inf;const Real rho_theta=rho_0 *rho_norm *(T *std::pow(p_0/p, rdOcp));state_pert(i, j, k, RhoTheta_comp)=rho_theta - getRhoThetagivenP(p_hse(i, j, k));const Real r2d_xy=std::sqrt((x-xc) *(x-xc)+(y-yc) *(y-yc));state_pert(i, j, k, RhoScalar_comp)=fourth *(one+std::cos(PI *std::min(r2d_xy, R)/R));})

amrex::Real Real

Definition: ERF_ShocInterface.H:19

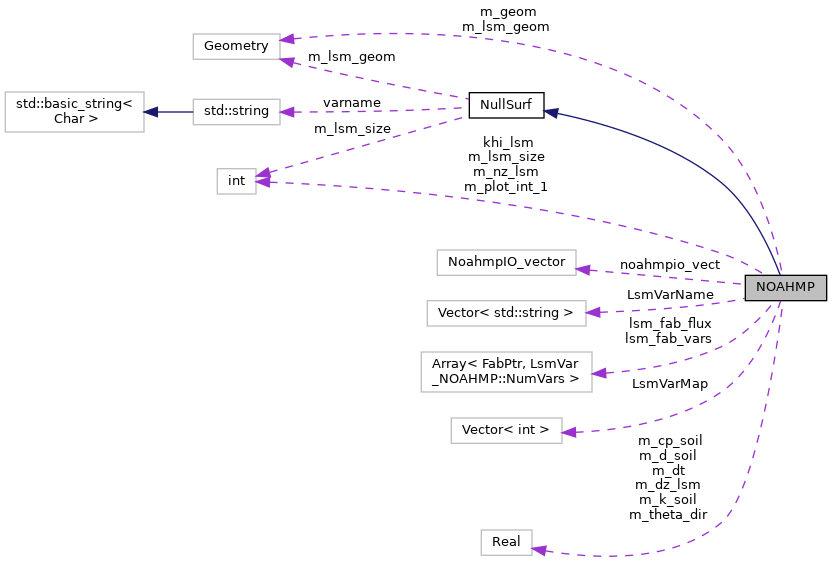

amrex::Array< FabPtr, LsmFlux_NOAHMP::NumVars > lsm_fab_flux

Definition: ERF_NOAHMP.H:209

NoahmpIO_vector noahmpio_vect

Definition: ERF_NOAHMP.H:212

amrex::Array< FabPtr, LsmData_NOAHMP::NumVars > lsm_fab_data

Definition: ERF_NOAHMP.H:206

amrex::Geometry m_geom

Definition: ERF_NOAHMP.H:188

@ sw_flux_dn

Definition: ERF_NOAHMP.H:32

@ sfc_emis

Definition: ERF_NOAHMP.H:26

@ sfc_alb_dir_vis

Definition: ERF_NOAHMP.H:27

@ sfc_alb_dif_nir

Definition: ERF_NOAHMP.H:30

@ lw_flux_dn

Definition: ERF_NOAHMP.H:37

@ cos_zenith_angle

Definition: ERF_NOAHMP.H:31

@ t_sfc

Definition: ERF_NOAHMP.H:25

@ sfc_alb_dir_nir

Definition: ERF_NOAHMP.H:28

@ sfc_alb_dif_vis

Definition: ERF_NOAHMP.H:29

@ t_flux

Definition: ERF_NOAHMP.H:45

@ tau13

Definition: ERF_NOAHMP.H:47

@ q_flux

Definition: ERF_NOAHMP.H:46

@ NumVars

Definition: ERF_NOAHMP.H:49

@ tau23

Definition: ERF_NOAHMP.H:48

@ qv

Definition: ERF_Kessler.H:28

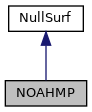

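The final kernel in the listing runs over `gbx`, which appears to extend the tile by one cell in x and y, while the staged Noah-MP outputs exist only on `bx`. The `std::min(std::max(...))` clamp maps each out-of-range index to the nearest index inside the tile. The following is a minimal, AMReX-free sketch of that idea in one dimension; `clamp_index` and `fill_with_halo` are hypothetical names introduced here for illustration and are not part of ERF or AMReX.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Clamp index i to the closed range [lo, hi], mirroring
// std::min(std::max(i, i_lo), i_hi) in the listing.
inline int clamp_index(int i, int lo, int hi)
{
    assert(lo <= hi);
    return std::min(std::max(i, lo), hi);
}

// Hypothetical helper (an assumption, not ERF code): copy a 1D row of valid
// values into a buffer with ng extra cells on each side. Each extra cell
// takes the value of the nearest valid cell.
inline std::vector<double> fill_with_halo(const std::vector<double>& valid, int ng)
{
    assert(!valid.empty() && ng >= 0);
    const int lo = 0;
    const int hi = static_cast<int>(valid.size()) - 1;

    std::vector<double> out;
    out.reserve(valid.size() + 2 * static_cast<std::size_t>(ng));

    // Loop over the grown range [lo - ng, hi + ng], reading from the clamped index.
    for (int i = lo - ng; i <= hi + ng; ++i) {
        out.push_back(valid[static_cast<std::size_t>(clamp_index(i, lo, hi))]);
    }
    return out;
}
```

For example, `fill_with_halo({1.0, 2.0, 3.0}, 1)` yields `{1.0, 1.0, 2.0, 3.0, 3.0}`: the two added cells repeat the edge values, which is the effect of reading `tmp_hfx_arr(ii,jj,0)` with clamped `ii` and `jj` in the listing.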