#include "ERF_Diffusion.H"
#include "ERF_EddyViscosity.H"
#include "ERF_SolveTridiag.H"
#include "ERF_GetRhoAlpha.H"
#include "ERF_GetRhoAlphaForFaces.H"
#include "ERF_SetupVertDiff.H"

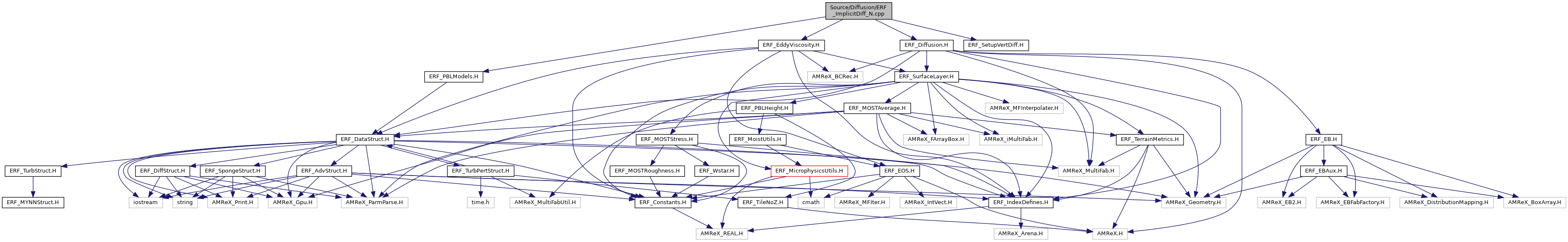

Include dependency graph for ERF_ImplicitDiff_N.cpp:

Macros

| #define | INSTANTIATE_IMPLICIT_DIFF_FOR_MOM_LU(STAGDIR) |

Functions

| void | ImplicitDiffForStateLU_N (const Box &bx, const Box &domain, const int level, const int n, const Real dt, const GpuArray< Real, AMREX_SPACEDIM *2 > &bc_neumann_vals, const Array4< Real > &cell_data, const GpuArray< Real, AMREX_SPACEDIM > &cellSizeInv, const Array4< const Real > &scalar_zflux, const Array4< const Real > &mu_turb, const SolverChoice &solverChoice, const BCRec *bc_ptr, const bool use_SurfLayer, const Real implicit_fac) |

| template<int stagdir> void | ImplicitDiffForMomLU_N (const Box &bx, const Box &, const int level, const Real dt, const Array4< const Real > &cell_data, const Array4< Real > &face_data, const Array4< const Real > &tau, const Array4< const Real > &tau_corr, const GpuArray< Real, AMREX_SPACEDIM > &cellSizeInv, const Array4< const Real > &mu_turb, const SolverChoice &solverChoice, const BCRec *bc_ptr, const bool use_SurfLayer, const Real implicit_fac) |

Macro Definition Documentation

◆ INSTANTIATE_IMPLICIT_DIFF_FOR_MOM_LU

#define INSTANTIATE_IMPLICIT_DIFF_FOR_MOM_LU(STAGDIR)

Value:

template void ImplicitDiffForMomLU_N<STAGDIR> ( \
    const Box&, \
    const Box&, \
    const int, \
    const Real, \
    const Array4<const Real>&, \
    const Array4< Real>&, \
    const Array4<const Real>&, \
    const Array4<const Real>&, \
    const GpuArray<Real, AMREX_SPACEDIM>&, \
    const Array4<const Real>&, \
    const SolverChoice&, \
    const BCRec*, \
    const bool, \
    const Real);
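The macro above expands to an explicit template instantiation, one per staggering direction. The sketch below shows the same pattern on a much smaller function. The helper `lowerCellOffset` and the macro `INSTANTIATE_LOWER_CELL_OFFSET` are hypothetical names invented for this illustration and are not part of ERF; the mapping 0, 1, 2 to x, y, z is likewise an assumption.

```cpp
#include <array>

// Hypothetical helper (not part of ERF): returns the (i, j, k) index offset
// from a face staggered in direction `stagdir` to the cell on its low side.
// Assumed convention for this sketch: 0 = x, 1 = y, 2 = z.
template <int stagdir>
std::array<int, 3> lowerCellOffset()
{
    static_assert(stagdir >= 0 && stagdir < 3, "stagdir must be 0, 1, or 2");
    std::array<int, 3> off{0, 0, 0};
    off[stagdir] = -1;  // stagdir is a compile-time constant in each instantiation
    return off;
}

// Same idea as INSTANTIATE_IMPLICIT_DIFF_FOR_MOM_LU: each use of the macro
// emits an explicit instantiation definition for one value of STAGDIR.
#define INSTANTIATE_LOWER_CELL_OFFSET(STAGDIR) \
    template std::array<int, 3> lowerCellOffset<STAGDIR>();

INSTANTIATE_LOWER_CELL_OFFSET(0)
INSTANTIATE_LOWER_CELL_OFFSET(1)
INSTANTIATE_LOWER_CELL_OFFSET(2)
#undef INSTANTIATE_LOWER_CELL_OFFSET
```

Instantiating explicitly in the .cpp file lets the template definition stay out of the header: other translation units only need a declaration, and the linker finds the three instantiations emitted here.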


Function Documentation

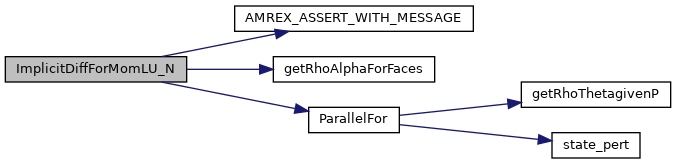

◆ ImplicitDiffForMomLU_N()

template<int stagdir>
void ImplicitDiffForMomLU_N (
    const Box & bx,
    const Box & ,
    const int level,
    const Real dt,
    const Array4< const Real > & cell_data,
    const Array4< Real > & face_data,
    const Array4< const Real > & tau,
    const Array4< const Real > & tau_corr,
    const GpuArray< Real, AMREX_SPACEDIM > & cellSizeInv,
    const Array4< const Real > & mu_turb,
    const SolverChoice & solverChoice,
    const BCRec * bc_ptr,
    const bool use_SurfLayer,
    const Real implicit_fac
)

Function for computing the implicit contribution to the vertical diffusion of momentum, with a uniform grid and no terrain.

This function (explicitly instantiated below) handles staggering in x, y, or z through the template parameter, stagdir.

Parameters

- [in] bx: cell-centered box to loop over
- [in] level: AMR level
- [in] domain: box of the whole domain
- [in] dt: time step
- [in] cell_data: conserved cell-centered rho
- [in,out] face_data: conserved momentum
- [in] tau_corr: stress contribution to momentum that will be corrected by the implicit solve
- [in] cellSizeInv: inverse cell size array
- [in] mu_turb: turbulent viscosity
- [in] solverChoice: container of parameters
- [in] bc_ptr: container with boundary conditions
- [in] use_SurfLayer: whether we have turned on subgrid diffusion
- [in] implicit_fac: if 1 then fully implicit; if 0 then fully explicit


AMREX_GPU_HOST_DEVICE AMREX_FORCE_INLINE void getRhoAlphaForFaces(int i, int j, int k, int ioff, int joff, amrex::Real &rhoAlpha_lo, amrex::Real &rhoAlpha_hi, const amrex::Array4< const amrex::Real > &cell_data, const amrex::Array4< const amrex::Real > &mu_turb, const amrex::Real mu_eff, bool l_consA, bool l_turb)

Definition: ERF_GetRhoAlphaForFaces.H:5


Here is the call graph for this function:

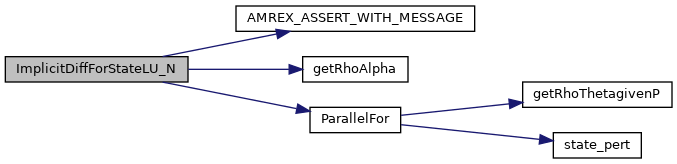

◆ ImplicitDiffForStateLU_N()

void ImplicitDiffForStateLU_N (
    const Box & bx,
    const Box & domain,
    const int level,
    const int n,
    const Real dt,
    const GpuArray< Real, AMREX_SPACEDIM *2 > & bc_neumann_vals,
    const Array4< Real > & cell_data,
    const GpuArray< Real, AMREX_SPACEDIM > & cellSizeInv,
    const Array4< const Real > & scalar_zflux,
    const Array4< const Real > & mu_turb,
    const SolverChoice & solverChoice,
    const BCRec * bc_ptr,
    const bool use_SurfLayer,
    const Real implicit_fac
)

Function for computing the implicit contribution to the vertical diffusion of theta, with a uniform grid, no terrain, and LU decomposition.

Parameters

- [in] bx: cell-centered box to loop over
- [in] level: AMR level
- [in] domain: box of the whole domain
- [in] dt: time step
- [in] bc_neumann_vals: values of derivatives if bc_type == Neumann
- [in,out] cell_data: conserved cell-centered rho, rho theta
- [in] cellSizeInv: inverse cell size array
- [in,out] hfx_z: heat flux in z-dir
- [in] mu_turb: turbulent viscosity
- [in] solverChoice: container of parameters
- [in] bc_ptr: container with boundary conditions
- [in] use_SurfLayer: whether we have turned on subgrid diffusion
- [in] implicit_fac: if 1 then fully implicit; if 0 then fully explicit

const int prim_scal_index = (qty_index >= RhoScalar_comp && qty_index < RhoScalar_comp+NSCALARS) ? PrimScalar_comp : prim_index;

AMREX_GPU_HOST_DEVICE AMREX_FORCE_INLINE void getRhoAlpha(int i, int j, int k, amrex::Real &rhoAlpha_lo, amrex::Real &rhoAlpha_hi, const amrex::Array4< const amrex::Real > &cell_data, const amrex::Array4< const amrex::Real > &mu_turb, const amrex::Real *d_alpha_eff, const int *d_eddy_diff_idz, int prim_index, int prim_scal_index, bool l_consA, bool l_turb)

Definition: ERF_GetRhoAlpha.H:5

Here is the call graph for this function: